In the evolving landscape of pharmaceutical science, artificial intelligence has become a powerful companion to traditional medicinal chemistry, biology, and pharmacology. The fusion of data science with experimental biology has given rise to AI-enhanced drug discovery processes that aim to accelerate the identification of promising targets, optimize the design of molecules with desired properties, and predict safety and efficacy before costly clinical trials begin. This transformation is more than an acceleration of existing workflows; it changes how scientists reason about complex biological systems, how they handle uncertainty, and how they prioritize experiments in the era of big data. At its core, AI in drug discovery turns heterogeneous streams of information (genomic data, chemical structures, assay results, clinical observations, electronic health records, and even literature trends) into coherent, actionable knowledge that guides research decisions with a rigor and speed that intuition alone cannot match. By blending statistical inference, machine learning, and mechanistic modeling, researchers can create iterative cycles in which hypotheses are rapidly generated, tested, and refined across in silico simulations and wet-lab validations, while preserving a focus on safety, regulatory alignment, and patient relevance.

Foundations of AI in Drug Discovery

To appreciate the promise of AI-enhanced drug discovery, one must first understand the foundational principles that govern how data-driven approaches intersect with biological complexity. The field relies on representing chemical structures and biological targets in a computationally tractable form, often using graph-based abstractions in which atoms appear as nodes and bonds as edges, while proteins are modeled through sequence patterns or structural motifs. This representation enables algorithms to learn relationships between molecular features and observed outcomes, such as binding affinity, selectivity, or metabolic stability. A crucial aspect of these foundations is the recognition that drug discovery is a multiscale enterprise, spanning atomic interactions, molecular conformations, cellular signaling networks, organism-level physiology, and patient-specific responses. AI systems that confront this multiscale character adopt modular architectures or hybrid models that combine data-driven components with domain knowledge, aiming for predictions that are both statistically robust and pharmacologically interpretable. The lesson of these foundations is that AI is most effective when it respects the constraints and priorities of experimental biology rather than trying to replace them. In practice, this means building models that can incorporate prior information, quantify uncertainty, and adapt to new data as they become available, all without sacrificing traceability or explainability for the decision makers who rely on these insights in a regulated environment.
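The graph abstraction can be made concrete with a small sketch. It encodes the heavy atoms of ethanol as nodes and its bonds as edges using only the standard library. The class `MolGraph` and its methods are hypothetical names chosen for illustration, not the API of any cheminformatics toolkit.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class MolGraph:
    """Hypothetical minimal molecular graph: atoms are nodes, bonds are edges."""
    atoms: list = field(default_factory=list)  # element symbol per node
    bonds: dict = field(default_factory=lambda: defaultdict(dict))  # i -> {j: order}

    def add_atom(self, symbol):
        """Append an atom and return its node index."""
        self.atoms.append(symbol)
        return len(self.atoms) - 1

    def add_bond(self, i, j, order=1):
        """Bonds are undirected, so the edge is stored in both directions."""
        self.bonds[i][j] = order
        self.bonds[j][i] = order

    def degree(self, i):
        """Number of explicit neighbors of atom i."""
        return len(self.bonds[i])


# Ethanol with implicit hydrogens: C-C-O
ethanol = MolGraph()
c1, c2, o = (ethanol.add_atom(s) for s in ("C", "C", "O"))
ethanol.add_bond(c1, c2)
ethanol.add_bond(c2, o)
```

A learning algorithm would consume the node labels and the adjacency structure; production pipelines typically attach richer atom and bond features than the element symbol and bond order shown here.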

Another cornerstone is the emphasis on data quality and provenance. AI models perform best when trained on diverse, well-curated datasets that cover a broad spectrum of chemical space, biological targets, and assay modalities. The realities of drug discovery include noisy measurements, batch effects, and biases introduced by assay design or sample selection. Therefore, robust AI systems implement calibration routines, cross-validation strategies, and external validation on independent datasets to check that predictions generalize beyond the specific data used for training. This discipline of careful data stewardship also extends to documentation and reproducibility, because regulators, funders, and collaborators increasingly require transparent audit trails that explain how a model arrived at a given decision. The foundations of AI-driven discovery thus rest on a balance between mathematical sophistication and practical discipline, enabling researchers to harness computational power without losing sight of experimental feasibility and scientific plausibility.
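One way to make cross-validation reflect generalization is to keep closely related samples together, so that a model is never tested on near-duplicates of its training data. The sketch below assumes a caller-supplied `group_of` function (for example, one returning a compound's scaffold or assay batch). Both that function and `grouped_folds` are illustrative names, and the grouping criterion is an assumption rather than something the text prescribes.

```python
def grouped_folds(items, group_of, n_folds):
    """Assign whole groups to folds so that no group spans two folds.

    items: list of samples
    group_of: callable mapping a sample to a hashable group key (assumed)
    n_folds: number of folds to produce
    """
    groups = {}
    for item in items:
        groups.setdefault(group_of(item), []).append(item)

    folds = [[] for _ in range(n_folds)]
    # Greedy balancing: place the largest groups first, each into the
    # currently smallest fold.
    for members in sorted(groups.values(), key=len, reverse=True):
        min(folds, key=len).extend(members)
    return folds
```

Each fold can then serve once as the held-out set. Fold sizes are only approximately balanced, which is the price of never splitting a group.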

Data Landscape and Integration

The data landscape in AI-enhanced drug discovery is vast and heterogeneous, encompassing chemical structures, biological assays, omics measurements, and real-world patient data. Each data type offers unique insights and presents specific challenges. Structural data, including 3D conformations and docking scores, provides a direct link to molecular interactions with biological targets but often lacks context about dynamic behavior in living systems. Assay data, derived from high-throughput screening and functional readouts, captures biological activity but can be noisy and uneven across experimental conditions. Omics data, such as genomics, transcriptomics, proteomics, and metabolomics, reveals system-wide responses to perturbations, yet these datasets are frequently high-dimensional and prone to batch effects. Real-world data from electronic health records, pharmacovigilance databases, and literature mining adds pragmatic value by reflecting actual clinical outcomes, but it also introduces issues of data privacy, heterogeneity in coding practices, and missing information. The integration of these diverse sources requires careful consideration of data schemas, ontologies, and alignment strategies that preserve biological meaning while enabling machine learning models to operate efficiently. Effective data integration also demands attention to provenance, versioning, and reproducibility so that researchers can trace back every prediction to the corresponding data lineage and transformation steps.

In practice, successful AI-enabled discovery pipelines begin with a data fabric that harmonizes disparate streams into a coherent analytical space. Data preprocessing steps remove artifacts, normalize scales, and correct systematic biases, creating a foundation upon which models can learn robust patterns. Feature engineering, when performed with domain awareness, translates raw measurements into descriptors that capture chemically and biologically meaningful information. For molecules, such descriptors might include topological indices, physicochemical properties, and learned embeddings from graph neural networks. For biological systems, features can reflect pathway involvement, gene expression signatures, or network connectivity. The integration of multi-modal data, where chemical, biological, and clinical signals are combined, empowers models to capture complex causal relationships and to forecast outcomes that hinge on interactions across different biological layers. Ultimately, the data landscape strategy must align with the intended decision points in the discovery workflow, ensuring that the right data feeds the right models at the right time to inform target selection, lead optimization, or translational assessment.
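As a minimal sketch of the normalization step, the function below z-scores assay readouts within each batch, a simple and common way to put batches on a comparable scale. It assumes batches have similar composition, which real data may violate. The function name and the `(batch_id, value)` input layout are illustrative choices.

```python
from collections import defaultdict
from statistics import mean, pstdev


def standardize_per_batch(readouts):
    """Z-score each readout within its own batch.

    readouts: list of (batch_id, value) pairs.
    Returns z-scores in the input order; a batch with zero spread maps to 0.0.
    """
    by_batch = defaultdict(list)
    for batch_id, value in readouts:
        by_batch[batch_id].append(value)

    # Per-batch mean and population standard deviation.
    stats = {b: (mean(v), pstdev(v)) for b, v in by_batch.items()}

    scaled = []
    for batch_id, value in readouts:
        mu, sigma = stats[batch_id]
        scaled.append(0.0 if sigma == 0 else (value - mu) / sigma)
    return scaled
```

After this step, two batches whose raw scales differ by a constant offset or multiplier yield the same standardized values, so a downstream model sees the relative ranking within each batch rather than the batch-specific scale.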

Generative Models and Molecular Design

Generative models have emerged as a transformative tool in the design of new chemical entities. Rather than screening existing libraries, researchers leverage neural networks to explore vast swaths of chemical space and propose novel structures with desired properties. Techniques such as variational autoencoders, generative adversarial networks, and reinforcement learning-based design enable the creation of molecules that optimize binding affinity, selectivity, synthetic accessibility, and pharmacokinetic profiles. The allure of generative design lies in its ability to propose diverse scaffolds that may not be represented in current libraries, thereby accelerating the discovery of innovative chemotypes. However, this power comes with responsibilities: ensuring that generated compounds are chemically valid, synthesizable, and likely to behave safely in biological systems. To address these concerns, designers frequently couple generative models with predictive checks for toxicity, metabolism, and off-target effects, and they may incorporate constraints that reflect practical laboratory capabilities and regulatory expectations. As generative methods mature, they increasingly support human-in-the-loop workflows where medicinal chemists guide the design space with domain expertise while the AI handles scalable exploration and ranking of candidates.

Moreover, the integration of synthetic planning tools with AI-driven design allows an end-to-end pipeline where novel molecules are not only imagined but also linked to practical synthetic routes. This end-to-end capability reduces the disconnect between theoretical novelty and experimental feasibility, enabling faster iteration cycles. The success of generative design hinges on careful validation: predicted properties must be confirmed experimentally, and the feedback from experiments must be fed back into the model to refine its understanding of structure-activity landscapes. In environments with high stakes, such as late-stage development, human oversight remains essential to interpret model outputs, assess risk, and ensure alignment with strategic goals and regulatory constraints. Through iterative refinement, generative design can broaden the chemical repertoire available for exploration while maintaining a disciplined focus on safety and practicality.

Graph-Based Representations and Deep Learning

Graph-based representations have become a cornerstone in AI-driven chemistry due to their natural fit for describing molecular structures and complex biological networks. Graph neural networks propagate information across nodes and edges to learn embeddings that capture local and global structure, enabling accurate predictions of properties such as solubility, permeability, and target binding profiles. In the context of protein targets, graph-based approaches can model residue interactions, allosteric sites, and conformational variability, providing a flexible framework for understanding how small molecules interface with dynamic proteins. The strength of graph-based methods lies in their ability to generalize across diverse chemical spaces and to incorporate relational information that traditional descriptor-based methods might overlook. When combined with attention mechanisms and multi-task learning, these models can simultaneously address multiple objectives, such as potency, selectivity, and ADMET characteristics, thereby informing lead prioritization with a holistic view of trade-offs.
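The phrase "propagate information across nodes and edges" can be illustrated with a single, untrained message-passing round. A real graph neural network would apply learned weight matrices and nonlinearities at this step; the fixed averaging rule below is a deliberate simplification, and `message_passing_step` is a hypothetical name.

```python
def message_passing_step(features, edges):
    """One round of sum aggregation, then averaging with the node's own state.

    features: dict node -> list of floats (all the same length)
    edges: iterable of undirected (u, v) pairs
    Returns a new dict of updated node features.
    """
    neighbors = {node: [] for node in features}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)

    updated = {}
    for node, h in features.items():
        # Sum the feature vectors of all neighbors (the "messages").
        agg = [0.0] * len(h)
        for nb in neighbors[node]:
            agg = [a + b for a, b in zip(agg, features[nb])]
        # Untrained update rule: average self state with the aggregate.
        updated[node] = [(x + a) / 2 for x, a in zip(h, agg)]
    return updated
```

Stacking several such rounds lets information travel further along the graph, which is how these models move from local to more global structure.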

Beyond molecular graphs, network biology offers a broader canvas where graph representations illuminate the architecture of signaling pathways, gene regulatory networks, and metabolic cascades. AI models that integrate network topology with molecular data can identify targets whose perturbation yields favorable phenotypic outcomes while minimizing unintended consequences. This systems-level perspective is particularly valuable for understanding polypharmacology, where a drug interacts with multiple targets to produce therapeutic effects. By mapping how perturbations propagate through networks, researchers can anticipate synergy or adverse effects and adjust design strategies accordingly. The convergence of graph learning with mechanistic insights thus supports a more nuanced approach to drug discovery that respects the interconnected nature of biology and the finite resources available for experimentation.
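A very simple proxy for "how perturbations propagate through networks" is to ask which nodes lie within a few directed steps of a perturbed target. The breadth-first search below does that on a toy adjacency dictionary. It ignores edge signs, weights, and kinetics, all of which a real network model would need, and the function name is illustrative.

```python
from collections import deque


def downstream_within(network, source, max_hops):
    """Nodes reachable from `source` in at most `max_hops` directed steps.

    network: dict node -> list of nodes it directly influences
    Returns dict node -> hop distance, excluding the source itself.
    """
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if dist[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for nxt in network.get(node, []):
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    del dist[source]
    return dist
```

Comparing the downstream sets of two candidate targets gives a first, coarse view of which one touches more of the network and may therefore carry more off-pathway risk.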

Virtual Screening and Docking with AI

AI-assisted virtual screening and docking are active areas where computational efficiency and predictive accuracy translate into tangible time savings in the lab. Traditional docking methods estimate how well a molecule fits into a protein's binding pocket, but they can be computationally intensive and sometimes miss nuanced interactions. Machine learning models augment docking by learning from historical docking results, experimental affinities, and structural features to re-rank candidate compounds or predict binding probabilities with higher throughput. These approaches can filter enormous compound libraries down to a feasible set for experimental testing, enabling researchers to prioritize experiments that have the highest expected value. A common strategy involves using fast, approximate predictive models to screen billions of molecules and then applying more rigorous, physics-based simulations to the top-ranked subset. This staged approach balances speed and accuracy, ensuring that computational resources are allocated where they yield the most information.
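The staged strategy can be sketched as a two-step funnel. Both scoring functions are placeholders supplied by the caller: `fast_score` stands in for a cheap learned model and `slow_score` for a costly physics-based calculation. Neither corresponds to a specific tool, and the parameter names are assumptions.

```python
import heapq


def staged_screen(library, fast_score, slow_score, keep, final):
    """Two-stage funnel: cheap score on everything, costly score on survivors.

    library: iterable of candidate identifiers
    fast_score, slow_score: callables returning a float, higher is better
    keep: number of candidates passed on to the expensive stage
    final: number of candidates returned
    """
    # Stage 1: stream through the library, retaining only the `keep` best.
    shortlist = heapq.nlargest(keep, library, key=fast_score)
    # Stage 2: the expensive scorer runs once per shortlisted candidate.
    return sorted(shortlist, key=slow_score, reverse=True)[:final]
```

Because `heapq.nlargest` keeps only `keep` items in memory, the first stage can run over a generator, which matters when the library is too large to hold at once.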

Nevertheless, the real-world success of AI-enhanced virtual screening depends on the quality of the target structure data and the representativeness of the training sets. When protein structures are uncertain or when allosteric sites are cryptic, AI models must incorporate uncertainty quantification and leverage alternative data modalities, such as cryo-EM density maps or comparative modeling, to maintain reliability. Moreover, predictive uncertainty must be communicated clearly to decision makers, who rely on these outputs to allocate budgets and set milestones. In practice, robust AI-driven docking workflows couple rapid screening with rigorous validation experiments, building a feedback loop in which experimental outcomes continually refine the screening model's predictive accuracy and its confidence estimates. Through this iterative process, virtual screening becomes a strategic accelerator rather than a black-box gatekeeper, enabling researchers to explore novel chemical spaces with a disciplined, evidence-based approach.

Multi-Objective Optimization and Lead Tuning

Drug discovery inherently involves balancing multiple, often competing objectives. A promising lead should exhibit potency against the target while showing favorable pharmacokinetic properties, acceptable safety margins, and feasible synthesis. AI enables multi-objective optimization by constructing models that simultaneously consider several criteria and produce Pareto-efficient solutions. This approach helps researchers navigate trade-offs, such as whether to prioritize metabolic stability over potency or to accept a longer synthetic route in exchange for improved safety profiles. Techniques such as multi-task learning, Bayesian optimization, and evolutionary strategies provide a principled framework for exploring the design space, identifying regions that maximize overall therapeutic potential while minimizing risk. Importantly, multi-objective optimization is complemented by uncertainty quantification, which reveals where the model is confident and where additional data could meaningfully reduce risk. In practice, decision makers can examine trade-off curves, interpret which structural features drive improvements in specific properties, and select candidate molecules that align with regulatory and manufacturing constraints.
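To make "Pareto-efficient" concrete: a candidate is on the Pareto front if no other candidate is at least as good on every objective and strictly better on at least one. The sketch below assumes all objectives are oriented so that higher is better, and uses a quadratic-time comparison that is adequate for small candidate sets. The function names are illustrative.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` everywhere and better somewhere.

    a, b: tuples of objective values, all to be maximized.
    """
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))


def pareto_front(candidates):
    """Names of candidates not dominated by any other candidate.

    candidates: dict name -> tuple of objective values (higher is better).
    """
    return [
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]
```

With hypothetical (potency, stability) scores such as `{"A": (0.9, 0.2), "B": (0.5, 0.5), "C": (0.4, 0.4)}`, candidate `C` drops out because `B` beats it on both axes, while `A` and `B` remain as distinct trade-offs for a chemist to weigh.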

As leads progress through optimization cycles, AI assists in prioritizing synthetic feasibility, cost considerations, and supply chain reliability. Predictive models can estimate synthetic complexity, potential bottlenecks, and the availability of starting materials, guiding chemists toward designs that balance innovation with practicality. This holistic view of lead tuning helps shorten development timelines, lower costs, and maintain alignment with the broader program strategy. The goal is to create a feedback-rich environment where experiments inform models, models guide design choices, and design choices are continually validated against real-world outcomes, ultimately yielding candidates with robust therapeutic potential ready for preclinical evaluation.

De Novo Design and Pharmacokinetics

De novo design is a frontier in which AI-driven systems propose entirely new chemical structures tailored to specific biological goals and pharmacokinetic targets. By enforcing constraints related to molecular weight, lipophilicity, polar surface area, and synthetic accessibility, these systems strive to produce candidates with a higher likelihood of success in later development stages. Successful de novo design requires a careful balance between novelty and tractability: novel molecules may offer fresh mechanisms of action, but they must be realistically synthesizable and biologically meaningful. Advances in representation learning, reinforcement learning, and optimization under constraints empower AI to explore inventive chemistries while respecting the practicalities of medicinal chemistry and manufacturing. Pharmacokinetic considerations, including absorption, distribution, metabolism, and excretion, are integrated into the design loop to foresee how a molecule will behave in vivo, informing structural choices that influence bioavailability, half-life, and clearance. The integration of ADME models with generative design yields candidates with a more favorable pharmacokinetic fingerprint, reducing the likelihood of late-stage attrition due to unforeseen liabilities.
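Property constraints of this kind are often applied as a simple filter over generated candidates. In the sketch below, the upper bounds (molecular weight 500, logP 5, polar surface area 140 square angstroms) are commonly cited rule-of-thumb cutoffs used purely as illustrative defaults. Real programs tune them per target, and the property values would come from a cheminformatics toolkit rather than being typed in by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    mol_weight: float  # g/mol
    logp: float        # lipophilicity (octanol-water partition, log scale)
    tpsa: float        # polar surface area, square angstroms


# Illustrative upper bounds, not values taken from the text.
LIMITS = {"mol_weight": 500.0, "logp": 5.0, "tpsa": 140.0}


def violations(candidate, limits=LIMITS):
    """Property names whose value exceeds the corresponding upper bound."""
    return [prop for prop, upper in limits.items()
            if getattr(candidate, prop) > upper]


def passes(candidate, limits=LIMITS):
    """True when the candidate satisfies every constraint."""
    return not violations(candidate, limits)
```

Returning the list of violated properties, rather than only a pass/fail flag, lets a generative loop penalize candidates in proportion to how far they stray from the desired profile.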

At the intersection of de novo design and pharmacokinetics lies a robust paradigm for predictive safety. AI models estimate potential toxicity signals early in the design process, including hepatotoxicity, cardiotoxicity, and off-target effects, enabling preemptive screening before compounds advance to costly experiments. Safety-centric constraints are embedded into optimization objectives to steer the generation process toward chemically sound, risk-aware candidates. While this approach does not eliminate the need for empirical testing, it shifts the discovery trajectory toward a higher-quality pool of candidates, where each molecule carries a more favorable balance of efficacy, safety, and manufacturability. In real-world pipelines, de novo design is often combined with scaffold-hopping strategies, libraries that emphasize diversity, and medicinal chemistry heuristics to maximize the probability that a new molecule will satisfy regulatory expectations and clinical viability.

AI for Predictive ADME/Tox

Predictive models of absorption, distribution, metabolism, excretion, and toxicity (ADME/Tox) are indispensable for forecasting how a compound will perform in humans. AI approaches in this domain combine structural descriptors with biological context to estimate properties such as oral bioavailability, blood-brain barrier penetration, hepatocyte metabolism rates, and potential liabilities associated with reactive metabolites. These predictions help researchers triage compounds early, avoiding investments in molecules likely to fail due to pharmacokinetic or safety concerns. The models gain reliability from training on diverse datasets that capture inter-individual variability, species differences, and methodological heterogeneity across laboratories. Uncertainty quantification plays a crucial role here: when predictions carry high uncertainty, researchers may opt to gather additional data, refine models, or adjust design strategies. The end result is an integrated view of how a molecule will behave in the body, guiding decisions on lead selection, dosing considerations, and the design of later-stage experiments that probe human-relevant pharmacology.

Beyond conventional ADME/Tox endpoints, AI systems increasingly capture nuanced safety signals such as idiosyncratic reactions, immune-mediated effects, and off-target receptor interactions that may contribute to adverse events. By learning from historical safety datasets and post-market surveillance, models can highlight structural motifs associated with risk and suggest mitigation strategies through chemical modification or alternate scaffolds. The ultimate aim is to deliver a more predictable development path, where the probability of success in preclinical and early clinical phases is enhanced by proactive risk assessment, data-driven design, and continuous learning from new evidence collected during trials and post-authorization monitoring.

Experimental Design and Active Learning

AI enhances experimental design by suggesting which assays to run, which concentrations to test, and how to allocate resources most efficiently. Active learning frameworks identify the data points that would be most informative for improving model performance, thereby accelerating discovery with fewer experiments. This capability is particularly valuable in early stages, when data collection is expensive and time-consuming. By prioritizing experiments that maximize information gain, researchers can refine predictive models faster, reduce uncertainty around key properties, and converge on high-potential candidates more quickly than through random or exhaustive testing alone. The active learning loop typically involves iterative cycles: proposing experiments, conducting them in the lab, updating models with new results, and re-evaluating the design space. The interplay between AI and experimentation cultivates a dynamic, evidence-driven culture in which every experimental choice is aligned with a clear informational objective and an anticipated impact on downstream decisions.
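The "propose experiments" step of the loop can be sketched with one common heuristic: measure next whatever an ensemble of models disagrees on most. The text does not name a specific acquisition rule, so treating ensemble spread as a proxy for informativeness is an assumption here, and `select_next_experiments` is a hypothetical helper.

```python
from statistics import pstdev


def select_next_experiments(pool, ensemble, batch_size):
    """Pick the unmeasured candidates on which the ensemble disagrees most.

    pool: list of candidate inputs that have not been measured yet
    ensemble: list of callables, each mapping an input to a predicted value
    batch_size: how many candidates to send to the lab this cycle
    """
    def disagreement(x):
        # Spread of predictions across models, used as an uncertainty proxy.
        return pstdev([model(x) for model in ensemble])

    ranked = sorted(pool, key=disagreement, reverse=True)
    return ranked[:batch_size]
```

In a full cycle, the selected candidates would be assayed, their results appended to the training set, the ensemble refit, and the selection repeated on the remaining pool.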

In practice, experimental design also addresses practical considerations such as assay robustness, throughput capabilities, and cost constraints. AI systems may suggest alternative assay formats, multiplex strategies, or parallel testing plans that maximize information per dollar spent. They can also forecast potential bottlenecks in the measurement process, enabling teams to preemptively allocate resources or adjust schedules. This holistic approach to experimentation fosters a synergy between computational predictions and laboratory reality, ensuring that AI-driven recommendations remain grounded in operational feasibility while still expanding the frontier of what is testable and observable in a given program.

Automation and Robotics in the Laboratory

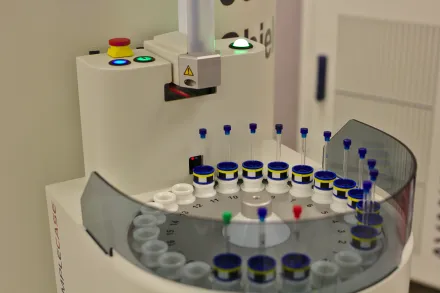

Automation and robotics extend the reach of AI by translating computational insights into tangible laboratory actions. Automated liquid handling, high-throughput screening platforms, and automated synthesis systems enable rapid, standardized execution of experiments with minimal human intervention. When guided by AI decision support, robots can execute optimized experimental plans, collect high-quality data, and feed results back into learning systems in near real time. This integration reduces variability that can arise from manual processes, increases replicability, and accelerates the pace of discovery. The collaboration between AI and automation is not about replacing scientists but about freeing them from repetitive tasks, allowing them to focus on higher-order reasoning, experimental interpretation, and the creative aspects of medicinal chemistry that require human judgment and imagination.

Nevertheless, automation introduces considerations regarding data integrity, calibration, and maintenance. AI systems must account for potential systematic errors introduced by instruments or plate effects, and protocols should include mechanisms for quality control, error detection, and traceability. The most successful deployments of automated AI-enabled labs feature human-in-the-loop governance, where researchers oversee critical decisions, validate automated results, and orchestrate complex workflows across multiple platforms. In such ecosystems, AI provides scalable decision support, robotics perform precise, repeatable actions, and scientists interpret outcomes within a rigorous framework that balances speed with reliability and safety.
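As one example of an automated quality-control check, screening groups often compute the Z'-factor of each plate from its positive and negative control wells and flag plates whose controls separate poorly. The text does not specify a metric, so choosing Z' is an assumption; the formula itself is the standard one, 1 - 3(sd_pos + sd_neg) / |mean_pos - mean_neg|.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor of a plate from its control wells.

    Each argument is a list of at least two raw readouts.
    Values near 1 indicate well-separated, low-variance controls.
    """
    separation = abs(mean(positive_controls) - mean(negative_controls))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    spread = stdev(positive_controls) + stdev(negative_controls)
    return 1.0 - 3.0 * spread / separation
```

A pipeline could compute this per plate as data arrive and route low-scoring plates to a scientist for review before their results reach the learning system.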

Regulatory Considerations and Validation

The regulatory landscape for AI-enhanced drug discovery is evolving, reflecting the growing integration of computational methods into the pharmaceutical development pipeline. Regulators emphasize model transparency, data provenance, validation rigor, and the ability to justify decisions made by AI systems that influence critical development steps. Validation strategies often involve external benchmarking, prospective studies, and rigorous cross-site reproducibility assessments. It is essential that AI tools provide interpretable outputs, quantify uncertainty, and document the limitations of their predictions. The goal is not to cloak technology in mystery but to provide regulators and stakeholders with a clear understanding of how models were trained, what data were used, how predictions should be interpreted, and how risk is managed throughout the discovery process. Transparent documentation supports trust, enables independent verification, and facilitates smoother transitions from discovery to preclinical validation and, where appropriate, clinical investigation.

Regulatory science increasingly recognizes the value of human-AI collaboration, where expert judgment remains central while AI offers scalable, quantitative guidance. This partnership entails well-defined governance structures, risk assessments, and testing plans that align with both scientific objectives and regulatory expectations. Companies pursuing AI-enabled discovery often adopt preclinical evidence generation strategies that emphasize reproducibility, data integrity, and model auditing. By demonstrating that AI contributions are evidence-based, auditable, and consistent with established pharmacology principles, teams can build a compelling case for the reliability of their computational predictions in the broader drug development program.

Data Privacy, Reproducibility, and Ethics

As AI-driven drug discovery increasingly depends on vast datasets that include clinical and patient-derived information, concerns about data privacy and ethical stewardship become central. Responsible use requires adherence to privacy-preserving techniques, consent frameworks, and secure data handling practices that protect individual identities while enabling scientific advancement. Reproducibility is another pillar, demanding rigorous versioning of datasets, models, and code, along with standardized evaluation metrics and well-documented experimental setups. When multiple organizations collaborate, governance agreements and interoperability standards help ensure that data sharing does not compromise proprietary information, patient confidentiality, or competitive integrity. Ethics in AI-driven discovery also extends to questions about bias, equitable access to resulting therapies, and the societal implications of accelerated drug development. Teams must consider how predictive models could influence decision-making in ways that affect patient outcomes and public health, striving for responsible innovation that benefits a broad population without overlooking vulnerable groups.
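Dataset versioning can start with something as simple as a content fingerprint recorded alongside every trained model. The sketch below hashes a list of JSON-serializable records so that reordering rows leaves the fingerprint unchanged while any change to a value alters it. The function name and the record layout are illustrative assumptions.

```python
import hashlib
import json


def dataset_fingerprint(records):
    """SHA-256 hex digest of a dataset, independent of record order.

    records: list of JSON-serializable dicts.
    """
    # Canonical form: sorted keys and fixed separators within each record,
    # then the serialized records themselves sorted.
    canonical = sorted(
        json.dumps(r, sort_keys=True, separators=(",", ":")) for r in records
    )
    digest = hashlib.sha256()
    for line in canonical:
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()
```

Storing this digest with the model's metadata lets a later audit confirm exactly which data a given prediction traces back to, without the fingerprint itself revealing any record contents.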

The ethical and privacy dimensions intersect with methodological choices. For instance, the selection of training data, the handling of missing values, and the design of objective functions may shape model behavior in subtle ways that require careful scrutiny. Forward-looking risk assessments and impact analyses can help teams anticipate unintended consequences and implement safeguards, such as bias mitigation strategies or human oversight for high-stakes predictions. In this ecosystem, AI is best viewed as a set of tools that extend human capability while remaining subject to the same standards of accountability, quality, and societal responsibility that govern all medical science.

Collaboration Between Industry and Academia

Advances in AI-enabled drug discovery have thrived on collaboration across sectors, bringing together the strengths of industry-scale data, rigorous regulatory experience, and academic innovation. Industrial programs contribute vast datasets, access to diverse biological targets, and robust translational pipelines, while academic groups offer theoretical advances, novel algorithms, and a culture of open inquiry that accelerates methodological breakthroughs. Successful collaborations embrace shared challenges such as data standardization, reproducible research practices, and clear expectations for intellectual property and publication. In practice, partnerships often involve joint consortia, cloud-based platforms for data and model sharing, and joint training programs that cultivate talent with interdisciplinary fluency in biology, chemistry, and data science. The collaborative spirit fuels a virtuous cycle: new scientific insights lead to better models, improved experimental designs generate richer data, and validated discoveries feed back into model refinement, creating a sustainable driver for innovation in drug discovery.

Such collaborations also address practical constraints, including the need to harmonize regulatory expectations across jurisdictions and to align on safety assessment frameworks that are appropriate for AI-informed decision making. They encourage the development of shared benchmarks, standardized reporting practices, and transparent evaluation criteria that enable independent validation and cross-institution learning. As the field matures, collaborative ecosystems may evolve into platform-like arrangements that democratize access to sophisticated AI tools, enabling smaller biotech startups and academic labs to compete more effectively by leveraging collective intelligence and shared infrastructure. The outcome is a more interconnected, resilient research community that can tackle complex diseases with greater speed, precision, and societal relevance.

Case Studies and Real-World Outcomes

Across the pharmaceutical industry, several case studies illustrate how AI-enhanced discovery pipelines can yield tangible benefits. In some programs, AI-driven molecular design has produced lead candidates with optimized potency and improved pharmacokinetic profiles in a fraction of the design cycles that traditional programs require. In others, predictive models have allowed teams to deprioritize entire libraries or alternative targets that would have consumed substantial resources, thereby freeing capacity for higher-potential avenues. Case studies also highlight the role of AI in accelerating repurposing efforts, where existing compounds are evaluated against new indications using multi-criteria optimization and mechanistic reasoning to reveal unexpected therapeutic opportunities. While not every project meets its ambitious goals, the cumulative experience suggests that AI can reduce uncertainty, shorten development timelines, and improve the efficiency of resource allocation when integrated thoughtfully into the discovery continuum.

Critically, real-world outcomes depend on disciplined data practices, robust validation, and ongoing governance. Companies that realize sustained benefits tend to adopt living models of validation that incorporate new data as it becomes available, maintain clear documentation of model performance over time, and implement decision workflows that preserve human oversight for high-stakes judgments. Importantly, even the most advanced AI systems cannot fully replace experimental confirmation; rather, they complement and accelerate the empirical process by guiding where to look, what to test, and how to interpret results in a scientifically rigorous way. The lessons from these case studies emphasize the importance of interpretability, risk management, and alignment with patient-centered objectives as essential ingredients for long-term success in AI-enhanced drug discovery.

Future Prospects and Challenges

Looking ahead, the trajectory of AI-enhanced drug discovery is marked by increasing sophistication in models, richer data ecosystems, and deeper integration with clinical development. Innovations such as few-shot learning, causal inference, and physics-informed machine learning promise to extend predictive capabilities to novel targets and unconventional chemistries with fewer data points. The expansion of multi-omics integration and longitudinal patient data can yield more nuanced insights into disease mechanisms and drug responses, potentially enabling truly personalized pharmacology in the future. Yet, several challenges remain. Data sharing and interoperability continue to be hurdles, as does the need for standardized benchmarks that enable fair comparisons of models across different contexts. The opacity of certain AI models can hinder regulatory trust, requiring continued emphasis on explanation, auditability, and robust validation paradigms. Additionally, the complexity of biological systems means that even the most powerful algorithms can be confounded by unmeasured variables or rare events, underscoring the ongoing need for human expertise to interpret results and make critical decisions in the face of uncertainty.

Ethical and societal considerations will shape the adoption of AI in drug discovery over the coming years. Questions about access to AI-driven tools, equitable distribution of resulting therapies, and the potential for unintended biases in training data will demand deliberate governance and inclusive stakeholder engagement. As AI becomes more embedded in the discovery process, the pharmaceutical industry may also explore new business models that prioritize collaboration, transparency, and shared risk. By fostering environments that reward rigorous science, reproducibility, and patient-centric outcomes, the field can harness AI to deliver safer, more effective medicines faster while upholding the highest standards of integrity and social responsibility. The ongoing evolution of AI-enhanced drug discovery thus holds great promise, contingent on disciplined science, thoughtful governance, and a steadfast commitment to improving human health through responsible innovation.